When AI Models Change Underneath You

AI models update on schedules you don't control. Building reliable systems on shifting foundations is the new normal, and it requires a different mindset.

Tim Clark

Co-founder · 25 March 2026 · 4 min read

TL;DR

Unlike traditional software, AI models get updated by someone else on schedules you don't control. Your prompts and automations can drift without warning. The businesses that thrive with AI are the ones that build with the expectation of change and have ways of noticing when things shift.

There’s something about AI that’s different from other technology we’ve worked with, and I’ve been turning it over in my mind for a while now.

With traditional software, when something changes, you know about it. There’s a release, a changelog, maybe a migration guide if it’s significant. Someone on your team made a decision to update, and if the update breaks something, you can trace back to that moment and figure out what happened.

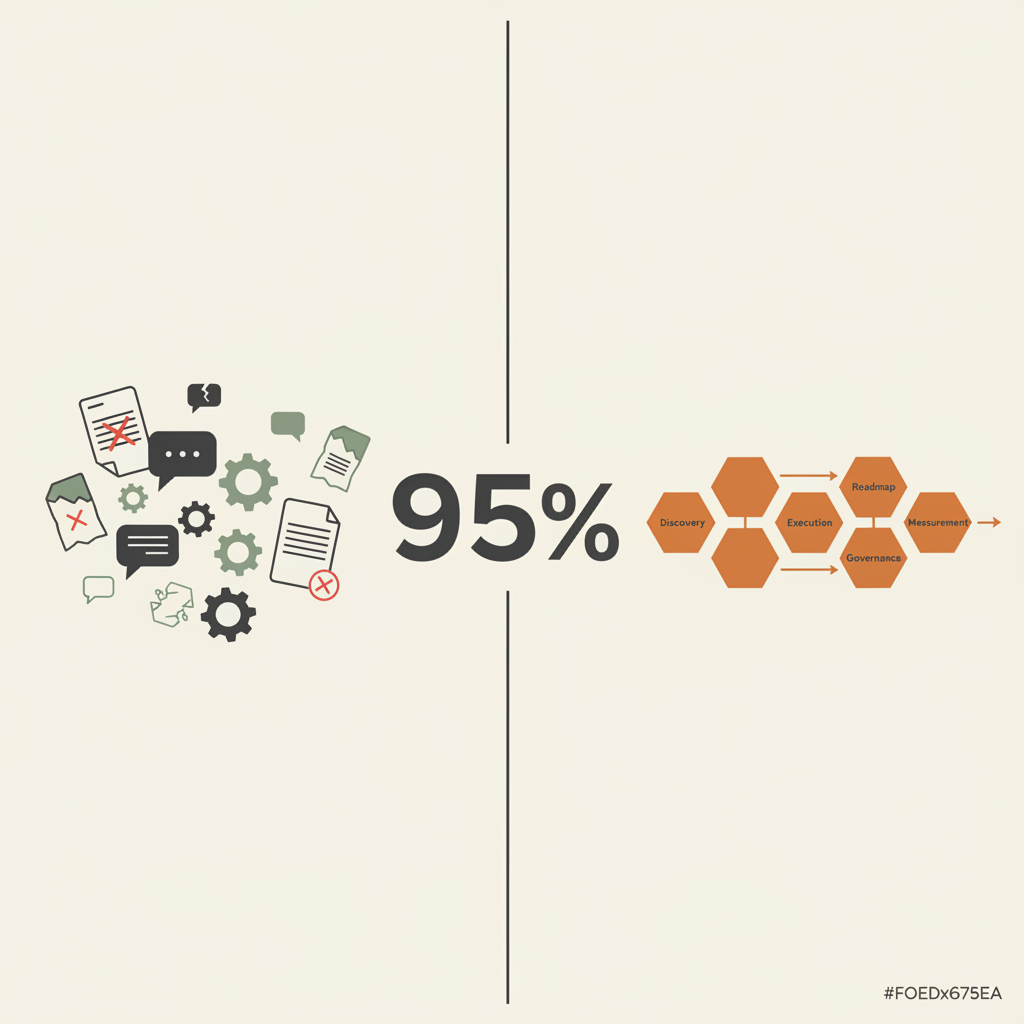

AI doesn’t work like that. In 2024 alone, OpenAI released over a dozen model updates, Google pushed five major Gemini versions, and Anthropic shipped three Claude generations. Any one of them could subtly change how existing integrations behaved.

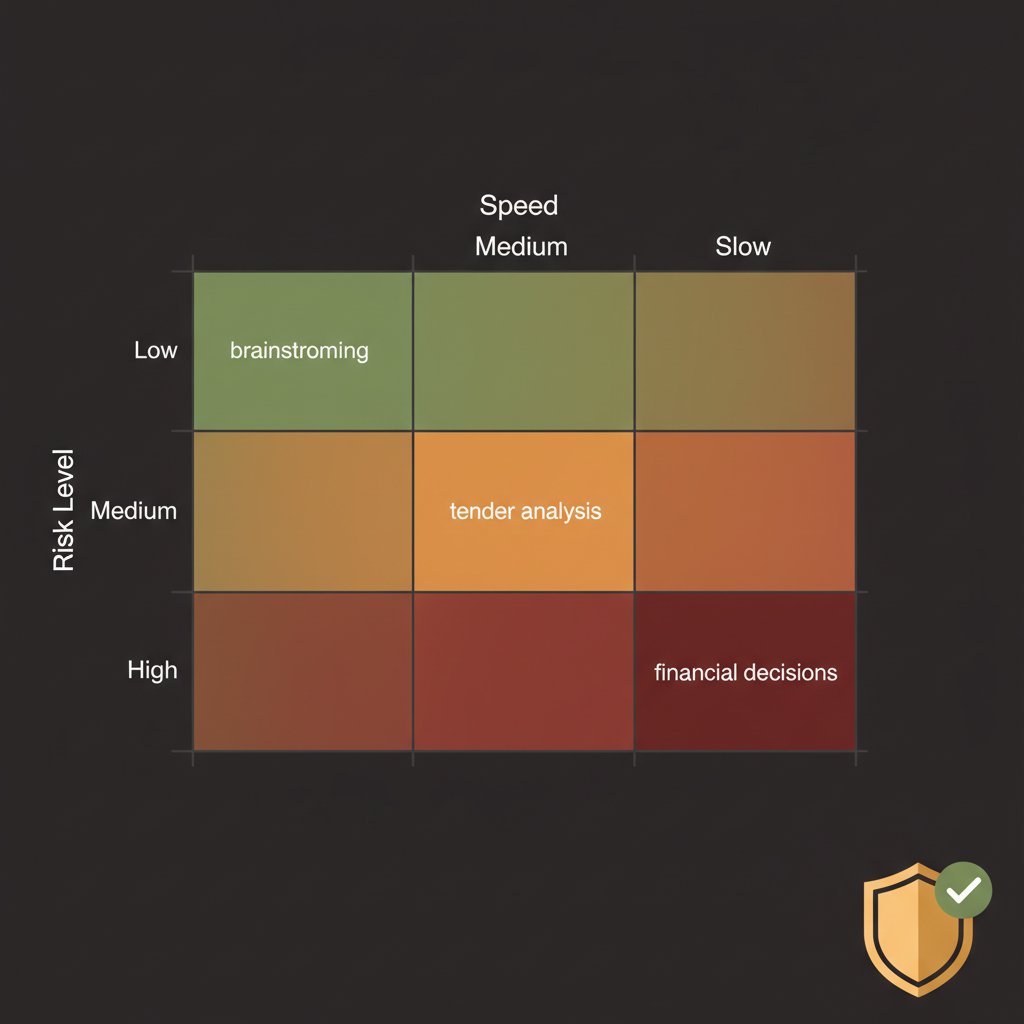

The models we build on top of are maintained by someone else entirely. They get updated on schedules we don’t control, for reasons that aren’t always explained, and the behaviour shifts in ways that might be subtle enough that you don’t notice immediately. Your prompt that worked beautifully for six months starts producing slightly different outputs. Your automation that handled a particular task reliably now misses edge cases it used to catch.

Nothing changed on your end. The foundation just moved a bit.

Building on shifting ground

I find this fascinating rather than alarming, because it means we need to think about AI systems differently from how we’ve thought about software before. It’s less like building on solid ground and more like building on something that’s slowly shifting. Not unstable exactly, but not static either.

The Gemini models have been an interesting example of this. Google iterates fast, with new versions coming out regularly, each one slightly different from the last. If you built something tuned to the behaviour of one version, the next version might handle things differently. Usually better overall, but “better overall” doesn’t always mean “better for your specific use case.”

We’ve started thinking about this in terms of baselines. Before putting something into production, we get really clear on what good looks like. What outputs do we expect? What’s the acceptable range of variation? How would we know if something had drifted outside that range? It’s the kind of discipline that feels obvious once you’re doing it, but it took us a while to realise it was necessary.
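
To make the baseline idea concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: `BaselineCase`, `find_drift`, and the text-similarity score are hypothetical names and choices, not a description of any particular tool. The `model` argument is whatever function you use to call your AI model, and in practice you would likely swap the crude similarity measure for a check that fits your task.

```python
from dataclasses import dataclass
from difflib import SequenceMatcher
from typing import Callable


@dataclass
class BaselineCase:
    prompt: str
    expected: str          # output recorded while the system was known to be good
    min_similarity: float  # acceptable range of variation for this case (0 to 1)


def similarity(a: str, b: str) -> float:
    """Rough textual similarity in [0, 1]; a stand-in for a task-specific metric."""
    return SequenceMatcher(None, a.strip().lower(), b.strip().lower()).ratio()


def find_drift(
    cases: list[BaselineCase], model: Callable[[str], str]
) -> list[tuple[str, float]]:
    """Return (prompt, score) for every case whose output fell below its threshold."""
    drifted = []
    for case in cases:
        score = similarity(case.expected, model(case.prompt))
        if score < case.min_similarity:
            drifted.append((case.prompt, score))
    return drifted


if __name__ == "__main__":
    cases = [BaselineCase("Classify: 'I was charged twice'", "category: billing", 0.9)]

    # Hypothetical stand-in for a real model call.
    def fake_model(prompt: str) -> str:
        return "category: billing"

    print(find_drift(cases, fake_model))
```

Run something like this on a schedule, or whenever a vendor announces a new version, and an empty list means your recorded cases still sit inside the range you decided was acceptable.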

Nobody else is watching this for you

The vendors aren’t going to tell you this stuff, not because they’re hiding anything but because they’re focused on capability. They want you excited about what the new version can do, not thinking about how it might affect what you’ve already built. That’s not unreasonable from their perspective. They’re building general-purpose tools for millions of users. Your specific implementation isn’t their concern.

Which means it has to be yours.

I’ve come to see this as just part of working with AI at this stage. The technology is moving fast, the models are improving constantly, and that improvement comes with change. Fighting that seems pointless. Better to build with the expectation that things will shift and have ways of noticing when they do.
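
One way of noticing, sketched under our own assumptions: keep a rolling record of whether recent outputs passed whatever check you trust, and raise a flag when the pass rate over that window drops. The `DriftMonitor` class, the window size, and the threshold below are all hypothetical choices for illustration, and you would tune them to your own system.

```python
from collections import deque


class DriftMonitor:
    """Tracks pass/fail results over a rolling window and flags a falling pass rate."""

    def __init__(self, window: int = 50, min_pass_rate: float = 0.9):
        self.results: deque[bool] = deque(maxlen=window)  # oldest results fall off
        self.window = window
        self.min_pass_rate = min_pass_rate

    def record(self, passed: bool) -> None:
        self.results.append(passed)

    def pass_rate(self) -> float:
        # With no data yet, report 1.0 rather than dividing by zero.
        if not self.results:
            return 1.0
        return sum(self.results) / len(self.results)

    def should_alert(self) -> bool:
        # Wait for a full window so a single early failure does not trigger an alert.
        return len(self.results) == self.window and self.pass_rate() < self.min_pass_rate


if __name__ == "__main__":
    monitor = DriftMonitor(window=4, min_pass_rate=0.75)
    for passed in [True, True, True, True, False, False]:
        monitor.record(passed)
    print(monitor.pass_rate(), monitor.should_alert())
```

The point of the window is that slow drift rarely shows up as one dramatic failure. It shows up as a pass rate that used to be steady and quietly isn’t any more.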

A relationship, not an installation

It’s actually quite an interesting problem when you frame it that way. How do you build reliable systems on top of foundations that evolve? How do you maintain quality when the underlying capabilities keep changing? These aren’t solved problems. We’re all figuring it out as we go.

The people who’ll do well with AI in the long run, I suspect, are the ones who get comfortable with that. Not treating AI as a fixed tool that you configure once and forget about, but as something that needs ongoing attention. A relationship more than an installation.

That might sound like more work, and it is. But it’s also more interesting. The tools keep getting better, which means the possibilities keep expanding. You just have to stay engaged with it.

Want to build AI systems that stay reliable as models evolve? Our AI Clarity Session helps you establish the baselines and monitoring that keep your AI working as intended. Or explore our real-world AI use cases to see how businesses are building with change in mind.